For the past couple of years, I’ve been saying that if AI wasn’t sucking up all the oxygen in the room, the most important innovation in martech would be what’s been happening at the data layer.

Cloud data warehouses/lakehouses pulled data from applications across the enterprise — from every department, not just marketing — unlocking greater cross-org analytics and insights. Then, with the rise of “reverse ETL,” those applications started pulling data from the warehouse. We could do more than analyze that data. We could operationalize it, activate it. In marketing, this triggered the great composable CDP revolution.

This data fluidity has arrived at the exact moment we need it most.

Because AI needs data like fire needs air.

In marketing, AI agents crave context — the full picture of who a customer is, what’s happening right now, what’s been tried before, and why decisions were made the way they were. They want behavioral signals, campaign performance, financial data, content metadata, approval histories. They want it all, unified, with consistent definitions, accessible without the integration equivalent of a game of Twister.

Platforms such as Databricks, Snowflake, and BigQuery make that achievable, across structured, unstructured, and streaming data of every kind. Any app or agent can plug into that layer, harness it, and contribute to it. And increasingly, apps and agents can run natively on those platforms too, operating securely on the same plane where the data resides.

When you step back, this starts to look very different from martech stacks as we’ve known them. What is this new martech architecture? And what does it make possible?

The New Martech “Stack” for the AI Age

Back in November, I partnered with Databricks to explore these questions in a research report that we released this week, The New Martech “Stack” for the AI Age.

They connected me with a number of their customers who were already pushing the envelope in this direction, including Meagen Eisenberg, CMO at Samsara; Bryce Peake, former VP of marketing decision sciences at Domino’s; Kumar Ram, VP/global head of marketing data sciences at HP; and Chris Wissing, chief product officer at Epsilon. I also spent time with Databricks’ CMO, Rick Schultz; their own head of martech, Elizabeth Dobbs; and VP of engineering Tasso Argyros, previously the founder and CEO of ActionIQ.

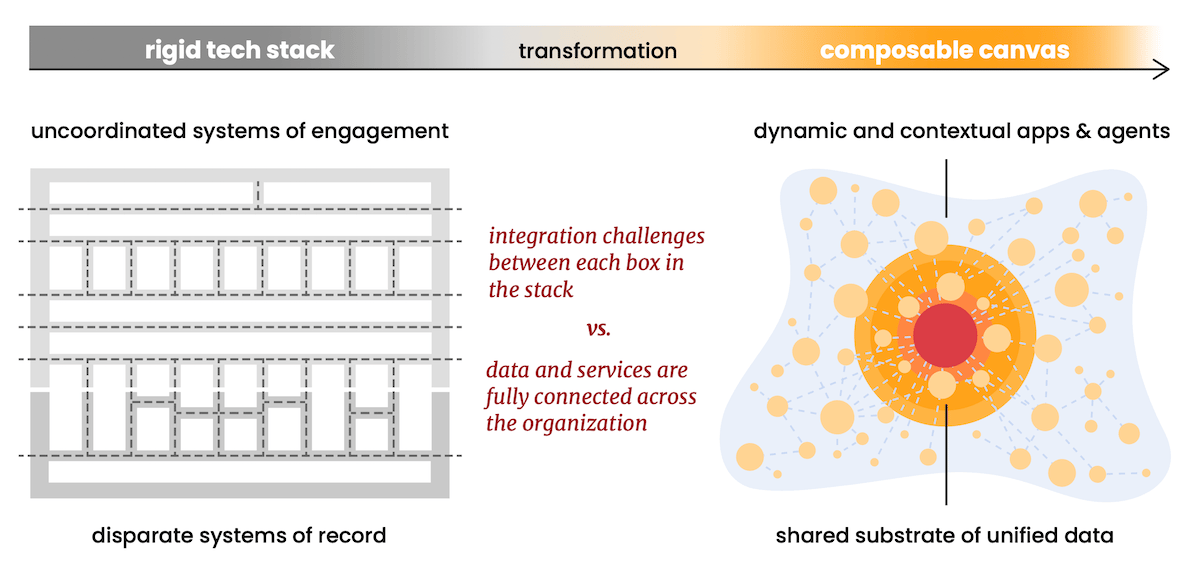

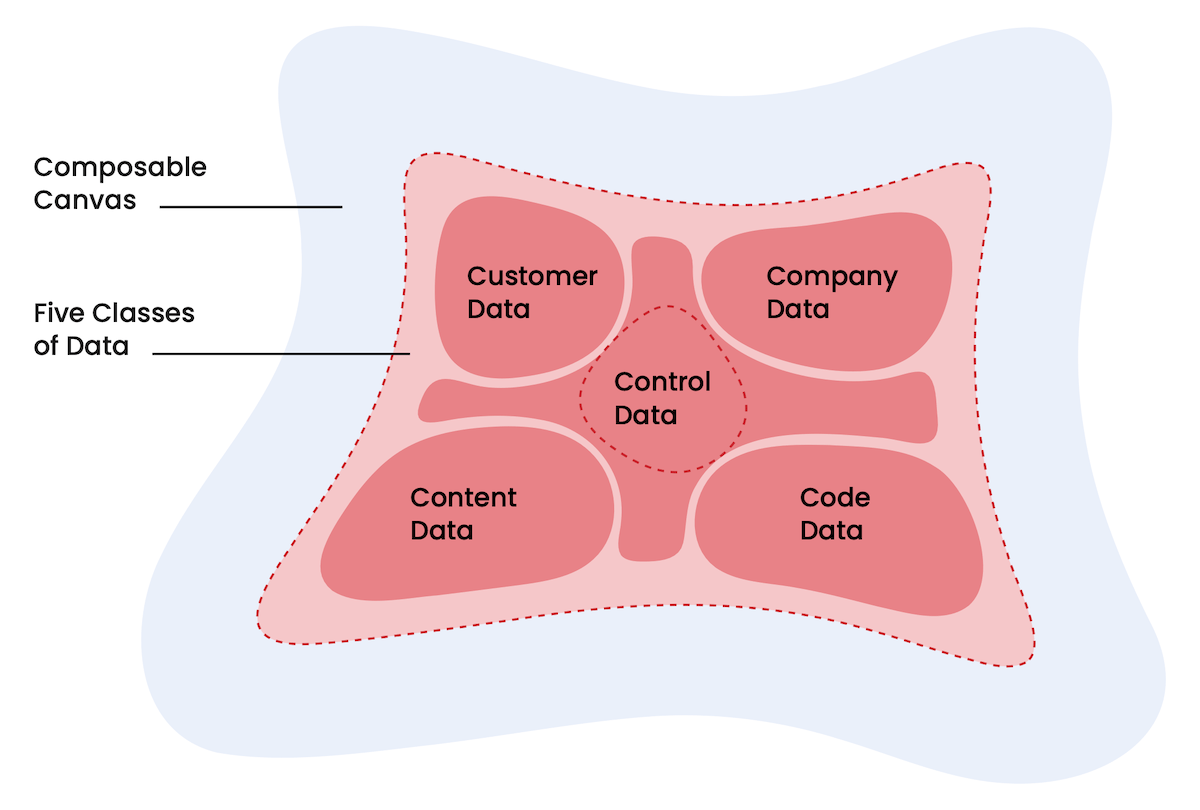

What emerged from those conversations was a picture of something that was less of a “stack” — that Tetris arrangement of stacked platforms and apps we all know so well, stitched together by a patchwork of plumbing — and more of a composable canvas, where everything was now adjacent and adaptable.

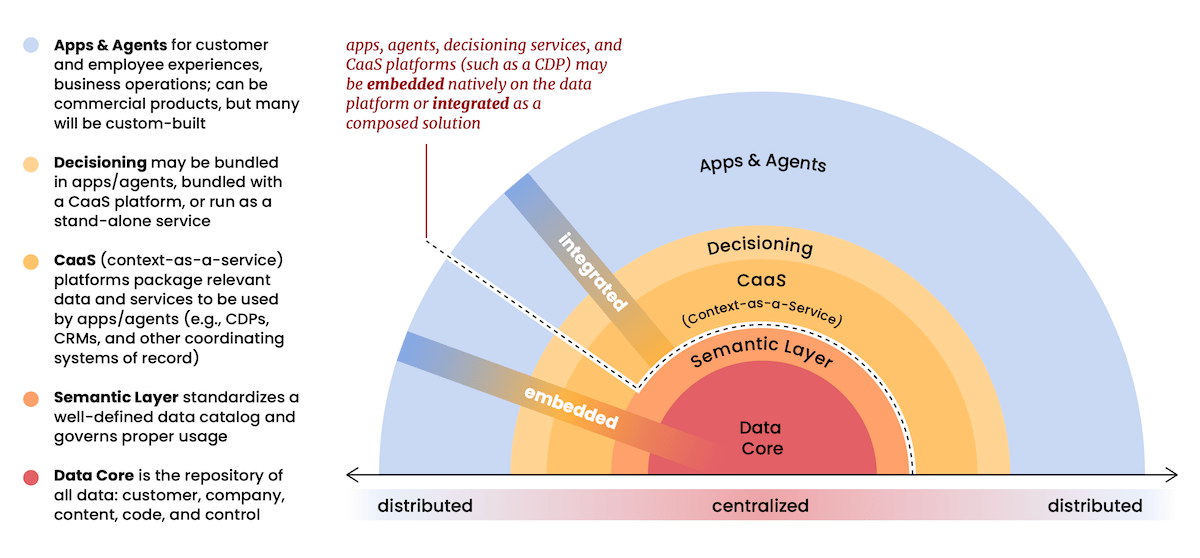

This composable canvas isn’t a free-for-all. The universal data platform serves as the app-agnostic center of gravity and a central data governance authority. Permissions, access controls, audit trails, data lineage, and privacy guardrails can all be enforced consistently at the platform level, regardless of which app or agent is touching the data. And a semantic layer wrapped around this data core ensures that every app and agent is working from the same definitions, metrics, and business logic that make it coherent.
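To make the semantic-layer idea concrete, here is a minimal Python sketch. It assumes a toy in-memory table and a hypothetical `SEMANTIC_LAYER` registry; neither is a Databricks, Snowflake, or BigQuery API. The point is that a metric like net revenue is defined once, and every app or agent that asks for it gets the same answer.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical order rows, standing in for a table in the shared data core.
ORDERS = [
    {"customer_id": "c1", "amount": 120.0, "status": "completed"},
    {"customer_id": "c2", "amount": 80.0, "status": "refunded"},
    {"customer_id": "c1", "amount": 50.0, "status": "completed"},
]

@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    compute: Callable[[list], float]

# One shared, hypothetical definition: refunded orders never count as revenue.
SEMANTIC_LAYER = {
    "net_revenue": Metric(
        name="net_revenue",
        description="Sum of completed order amounts; refunds excluded.",
        compute=lambda rows: sum(r["amount"] for r in rows if r["status"] == "completed"),
    ),
}

def get_metric(name: str, rows: list) -> float:
    """Every app or agent resolves a metric through the same registered definition."""
    return SEMANTIC_LAYER[name].compute(rows)

# Two different consumers get the same number because they share one definition.
email_agent_view = get_metric("net_revenue", ORDERS)
ads_dashboard_view = get_metric("net_revenue", ORDERS)
```

Without the shared registry, each tool would re-implement "revenue" on its own, and one of them would eventually forget to exclude refunds.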

Between that data core and an expanding canvas of apps and agents, commercial and custom-built, there’s a role for coordinating platforms that assemble the right data and functionality for a specific user (agent or human), at a specific moment, in the context of that domain of expertise. In marketing, that domain might be ad campaigns, social media engagement, account-based orchestration, or ecommerce personalization.

This work is known as “context engineering” for AI agents, giving them what they need to be efficient and effective. Currently, most context engineering is done from scratch by AI developers. It’s complex, technical, and difficult to maintain.

However, I believe a lot of context engineering work can be productized by domain-specific platforms, turning it into a service that any app or agent can tap into. As I wrote last month (here and here), I believe such context-as-a-service (CaaS) platforms are an evolutionary path for martech SaaS platforms such as CDPs and CRMs.

They become less “systems of record” — since the ground truth ultimately lives in the data core — and more “systems of context” that make that data actionable in a domain.

While decisioning is likely embedded in these systems of context, as is currently the case with many martech SaaS platforms, it could be more powerful as an independent service — a consumer of context, rather than a provider. Such decisioning engines could optimize for outcomes that span multiple domains.
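One way to picture that separation is the hypothetical Python sketch below. Each "system of context" exposes a `context_for` method for its own domain, and a separate decisioning function consumes context from several of them. The class names, the engagement counts, and the scoring rule are all illustrative assumptions, not any vendor’s design.

```python
from typing import Protocol

class ContextProvider(Protocol):
    """A hypothetical 'system of context': turns shared data into domain context."""
    domain: str
    def context_for(self, customer_id: str) -> dict: ...

class EmailContext:
    domain = "email"
    def __init__(self, opens_last_30d: dict):
        self._opens = opens_last_30d
    def context_for(self, customer_id: str) -> dict:
        return {"recent_opens": self._opens.get(customer_id, 0)}

class AdsContext:
    domain = "ads"
    def __init__(self, clicks_last_30d: dict):
        self._clicks = clicks_last_30d
    def context_for(self, customer_id: str) -> dict:
        return {"recent_clicks": self._clicks.get(customer_id, 0)}

def decide_next_channel(customer_id: str, providers: list) -> str:
    """An independent decisioning service: a consumer of context, not a provider.
    Hypothetical rule: pick the domain with the highest total engagement count."""
    scores = {}
    for p in providers:
        ctx = p.context_for(customer_id)
        scores[p.domain] = sum(ctx.values())
    return max(scores, key=scores.get)

providers = [EmailContext({"c1": 5}), AdsContext({"c1": 2, "c2": 7})]
```

Because the decision function depends only on the shared `context_for` interface, a new domain can join the list without any change to the decisioning logic, which is what lets it optimize across domains.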

That stable center of the composable canvas — data, semantics, context, and decisioning — enables an expansive and dynamic ecosystem of apps and agents to thrive. They can proliferate into the thousands: commercial and custom, persistent and ephemeral, built by vendors and vibe coders alike. This is the new shape of martech in the AI age: not a rigid stack, but a governed core with a fluid, fast-changing frontier around it.

A Grand Unifying Theory of Martech

There were two realizations in this project that blew my mind.

The first was recognizing the full scope of what could live in this universal data layer. In martech, our natural instinct is to think of customer data — bringing it together from all the disparate sources in which it’s generated. The classic mission of a CDP, but baked into your infrastructure with far less integration gymnastics.

That’s valuable, but it’s only one dimension of the marketing machine.

There’s an enormous amount of data about the business and its operations — company data — that has been hard for marketers to tap programmatically. This includes data about the structure and performance of marketing campaigns and programs, but also data from across the organization: inventory, logistics, finances, resource allocation, service tickets, product roadmaps, production schedules, sales pipelines, decision traces. The operational reality of the business that marketing historically had to beg other departments to share.

Aligned with customer data, this unlocks a more complete picture of the business of marketing that AI agents can actually act on, not just analyze.

Then there’s content. It’s the data that marketers are least used to thinking of as data — creative, unstructured, and stubbornly resistant to being treated like a database entry. But generative AI changes that relationship. When brand guidelines, creative assets, and performance metadata live on the same common plane as everything else, content stops being something you retrieve and starts being something you reason over.

But an even bigger conceptual leap is treating code as data.

AI models and their weights. Prompts and prompt templates. Agent skill definitions. Source code for custom applications. Configurations for agents and automations. They’re all artifacts that can be versioned, governed, queried, and updated like any other data asset. When your marketing automation is a prompt chain rather than a flowchart, the prompt is the logic. And logic, it turns out, is just data.
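A minimal sketch of what "prompt as data" can mean in practice, assuming a hypothetical `PromptRecord` type: each new template is appended as a version rather than overwritten, so the logic an agent runs can be inspected, pinned, or rolled back like any other record.

```python
from dataclasses import dataclass, field
from typing import Optional

@dataclass
class PromptRecord:
    """A prompt template treated as a versioned data asset (hypothetical type)."""
    name: str
    versions: list = field(default_factory=list)

    def publish(self, template: str) -> int:
        """Append a new version and return its 1-based version number."""
        self.versions.append(template)
        return len(self.versions)

    def render(self, version: Optional[int] = None, **params: str) -> str:
        """Render a pinned version, or the latest one if no version is given."""
        template = self.versions[-1] if version is None else self.versions[version - 1]
        return template.format(**params)

welcome = PromptRecord("welcome_email")
welcome.publish("Write a friendly welcome email to {first_name}.")
welcome.publish("Write a short, friendly welcome email to {first_name} about {product}.")

latest = welcome.render(first_name="Ana", product="Acme")
pinned = welcome.render(1, first_name="Ana")
```

Here `latest` uses version 2 while `pinned` still runs version 1, the same way a query can target an older snapshot of a table.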

Finally, there’s control data — the governance layer that makes all of the above trustworthy. Policies, permissions, business rules, guardrails. The definitions in your semantic layer. The logic that keeps AI and humans alike operating within acceptable bounds. The very scaffolding of the universal data layer, it turns out, is data too.
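Control data can be sketched the same way. In this hypothetical example, consent guardrails live as rows in `POLICIES`, and a single `is_allowed` check evaluates them for any caller, human or agent. The policy IDs and profile fields are assumptions for illustration only.

```python
# Hypothetical guardrails stored as data rows rather than hard-coded branches.
POLICIES = [
    {"id": "no_email_without_consent", "action": "send_email", "requires": "email_consent"},
    {"id": "no_sms_without_consent", "action": "send_sms", "requires": "sms_consent"},
]

def is_allowed(action: str, profile: dict, policies: list) -> tuple:
    """Evaluate an action against every policy row that applies to it.

    Returns (allowed, violated_policy_ids). The same check runs no matter
    which app, agent, or person is requesting the action.
    """
    violated = [
        p["id"]
        for p in policies
        if p["action"] == action and not profile.get(p["requires"], False)
    ]
    return (not violated, violated)

profile = {"email_consent": True}
email_check = is_allowed("send_email", profile, POLICIES)
sms_check = is_allowed("send_sms", profile, POLICIES)
```

Adding a new guardrail means appending a row, not redeploying code. That is the practical sense in which governance becomes data.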

I believe this is the Grand Unifying Theory of Martech: everything is data. Martech no longer merely sits on data. It actually is data.

Customer profiles, marketing campaigns, brand assets, the AI agent writing emails, even the governance frameworks keeping everything in check — they’re all data, all adjacent, and all interoperable.

Making the Math of Integration Approach Zero

The second mind-blowing moment in this research hit me personally.

I’ve spent much of the past decade working to solve the challenges of integration that have plagued the martech industry. Point-to-point integrations were often the richest, but the math was brutal. The complexity grew quadratically, O(n²), as every product theoretically needed an integration to every other product in your stack. For most, it wasn’t worth it. So most things didn’t get integrated with each other. Instead, the silos multiplied.

Hub-and-spoke architectures emerged in response to that challenge, giving rise to SaaS platform ecosystems. HubSpot’s App Marketplace. Salesforce’s AppExchange. Shopify’s App Store. Partners in those ecosystems would build integrations to the platform. Customers who then connected those products to the platform got the benefit of cross-system orchestration with only linear complexity — O(n) — one integration for each product rather than one for every possible pair.

iPaaS products, such as Make, n8n, Workato, and Zapier, were another kind of hub-and-spoke platform. More of a neutral ground for cross-platform integrations, but the same fundamental model.
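The arithmetic behind the point-to-point vs. hub-and-spoke contrast is easy to check. With n products, connecting every pair directly takes n(n-1)/2 integrations, which grows as O(n²), while a hub needs only one integration per product. A quick Python sketch:

```python
def point_to_point(n: int) -> int:
    """Integrations needed if every product connects directly to every other: n(n-1)/2."""
    return n * (n - 1) // 2

def hub_and_spoke(n: int) -> int:
    """Integrations needed if every product connects once to a central hub: n."""
    return n

for n in (10, 50, 100):
    print(n, point_to_point(n), hub_and_spoke(n))
# 10 45 10
# 50 1225 50
# 100 4950 100
```

At 100 products, the gap is 4,950 integrations versus 100, which is why hub-and-spoke ecosystems took hold.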

But the hub then became the bottleneck. It constrained what data could be sent or retrieved. And you were never really sharing data so much as moving it — copying it between systems, maintaining parallel versions of truth that drifted apart the moment something changed upstream.

What if the data never had to move at all?

When apps and agents operate on a shared/native data substrate — when they’re not exchanging data but simply accessing the same data — the integration math doesn’t just improve. It collapses. Complexity approaches O(log n), as each app or agent attaches through light configuration more than an engineered integration.

The curve that once shot toward the sky bends almost flat.

I’ve spent years building bridges between data islands. Negotiating what fields could cross. Watching syncs fail. Untangling the consequences of three systems that each believed they held the authoritative version of a customer record.

This substrate architecture of a unified data platform doesn’t build better bridges. It drains the water between the islands and lets everything stand on the same ground.
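As a toy analogy, with Python object references standing in for a shared table and deep copies standing in for syncs, the difference between moving data and accessing it in place looks like this. Nothing here models a real data platform; it only shows why copied records drift while readers of one shared record cannot disagree.

```python
import copy

# A customer record in a source system.
crm = {"c1": {"email": "ana@old.example"}}

# Hub-and-spoke style: downstream tools receive their own copy at sync time.
email_tool = copy.deepcopy(crm)
ads_tool = copy.deepcopy(crm)

# Substrate style: apps and agents reference the same shared table.
shared_table = {"c1": {"email": "ana@old.example"}}
agent_a_view = shared_table
agent_b_view = shared_table

# The customer updates their email upstream.
crm["c1"]["email"] = "ana@new.example"
shared_table["c1"]["email"] = "ana@new.example"

# Copies keep the stale value until the next sync; shared readers see the change at once.
print(email_tool["c1"]["email"])    # ana@old.example
print(agent_a_view["c1"]["email"])  # ana@new.example
```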

The Arc of Martech Bends Toward Convergence

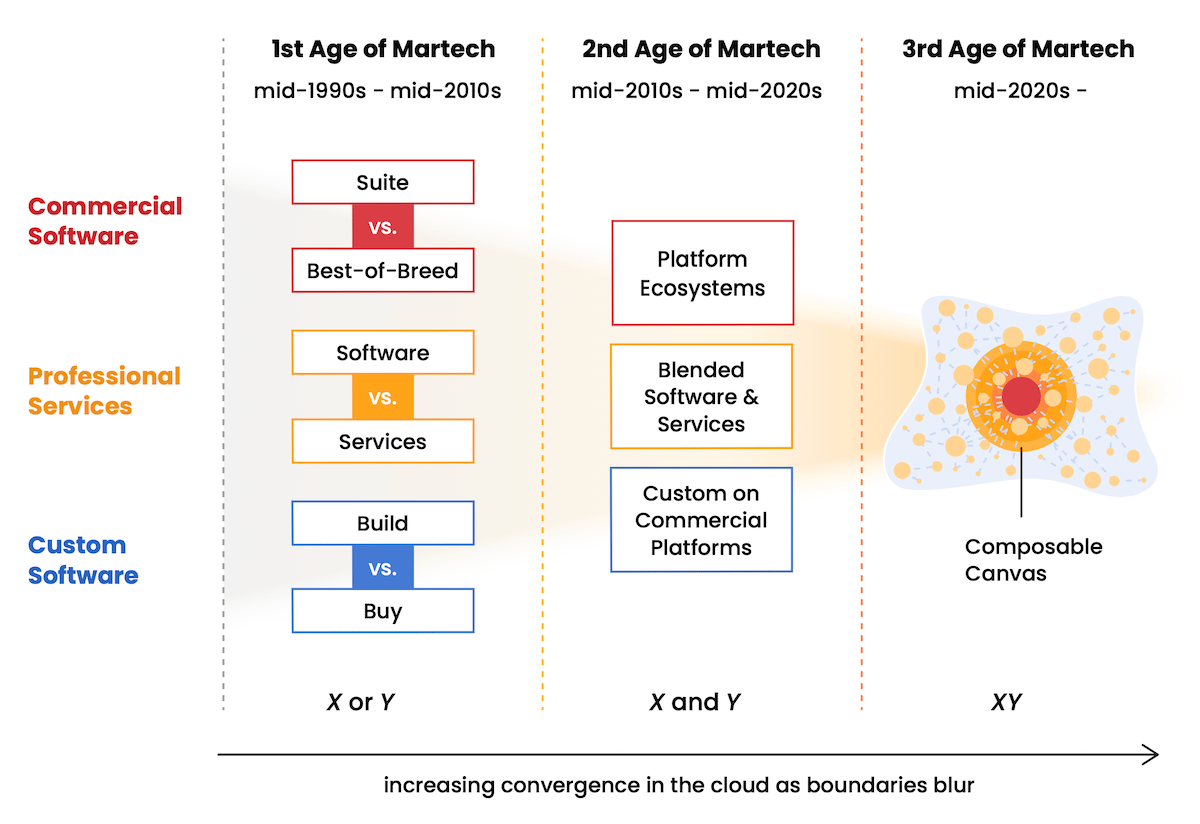

Martech has been on a multi-decade journey toward convergence. We started in an age of binary choices: suite vs. best-of-breed, software vs. services, build vs. buy. Chafing against those constraints, the industry advanced into the platform age: integrated hub-and-spoke ecosystems, blended models of software and services, and buy-and-build customization on top of commercial platforms.

Now we’re entering a third age of accelerated convergence where those old distinctions dissolve almost completely.

Notice that I say convergence, not consolidation. The commercial martech landscape remains robust with over 15,000 solutions. While AI is disrupting classic SaaS, waves of AI-native products keep entering the space anew. The infrastructure of hyperscalers, data platforms, and AI frontier labs may be consolidated, but the apps and agents on top of them are more diverse than ever.

And then there’s the hypertail: the blossoming field of apps and agents that companies are spinning up on their own, empowered by agentic coding and vibe coding tools. It dwarfs the long tail of the commercial landscape.

I remain confident that the future of martech will have more software, not less. But the stack metaphor of the past two decades is creaking with age, slow to adapt. The rigid boxes. The vertical Jenga tower. The piles of partial integrations.

The composable canvas is what I believe comes next.

May the “stack” be ever in your favor,

Scott

P.S. I’ve only discussed a fraction of what’s covered in The New Martech “Stack” for the AI Age. In the full report, I dig into the hypertail, context graphs, dynamic partnerships, a complete mapping of existing martech products into this architecture, and the design principles that move you to this model in an evolutionary, step-wise fashion. You can download a free copy here.